TL;DR

A staggering 82% of candidates say they would use AI assistance during technical interviews if they thought employers wouldn't detect it. As a founder or CTO of a software development company, you're likely already dealing with this.

A single bad hire costs organizations over $50,000 in direct losses. Here are the most practical, proven ways to catch cheaters and hire genuinely skilled engineers - including how platforms like Utkrusht AI are making traditional cheating structurally irrelevant.

Key Takeaways:

Stop assessing algorithm memorization - start assessing real problem-solving in real codebases

Use explanation-based follow-ups after every coding task to expose AI dependency

Behavioral AI proctoring catches patterns that screen sharing alone will always miss

Allowing monitored AI use reveals more about true skill than banning it

Watch-them-work assessments make cheating impractical, not just against the rules

Why Catching Cheaters in Tech Interviews Has Become a Real Problem

You post a job opening, 150+ applications land in 48 hours, you run coding tests, and bring someone on - only to find they can't debug a basic API without AI writing every line.

Candidates clear assessments, explain system architecture, and pass every round, then join their first sprint and struggle to write a basic function. This isn't rare anymore. It's a pattern in 2026.

Fabric's analysis of over 50,000 candidates found cheating adoption more than doubled from 15% in June 2025 to 35% by December 2025. Modern cheating tools integrate directly with the OS graphics pipeline, using DirectX overlays on Windows and Metal framework layers on macOS to render answers visible only on the candidate's local display.

When the candidate shares their screen via Zoom, Teams, or Google Meet, the conferencing software captures everything beneath the overlay while the overlay itself stays invisible.

For small and mid-sized engineering teams, this wastes 30% of hiring loop time, produces bad hires, and stalls product momentum.

This is precisely the challenge Utkrusht AI was built to address from the ground up - replacing flawed screening methods with a "Watch-them-Work" model that exposes genuine skill under real working conditions.

1. Watch the Coding Process, Not Just the Output

One of the easiest and most reliable ways to catch candidates using AI during tech interviews is to watch how they code, not just what they produce.

When hiring managers compared only final code samples from AI vs. real engineers, distinguishing them was nearly impossible - a coin flip. But watching the coding process made the difference clear.

Real engineers don't write code linearly; they move around, rename variables, and test edge cases. These human behaviors make AI usage far easier to spot. This insight is central to Utkrusht AI's "Watch-them-Work" assessment methodology, which evaluates candidates on their full problem-solving workflow, not just final output.

What to Look For During Live Sessions

Blocks of code appearing all at once instead of typed progressively

Eyes shifting to the side repeatedly, as if reading another screen

Pauses and filler words like "Hmm," which may signal waiting for AI-generated text to load

Answers that sound polished but don't connect logically to the question
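The first signal in the list above, code arriving in blocks rather than being typed, can be checked mechanically if your interview editor logs its change events. The following is a minimal sketch under that assumption: `EditEvent`, its fields, and the 80-character threshold are hypothetical names and values chosen for illustration, not the format of any particular platform.

```python
from dataclasses import dataclass


@dataclass
class EditEvent:
    """One editor change (hypothetical log format)."""
    timestamp_s: float   # seconds since the session started
    chars_inserted: int  # characters added by this single change


def find_paste_like_bursts(events, min_chars=80):
    """Return events that insert at least `min_chars` characters at once.

    Ordinary typing produces many small insertions; one large insertion
    suggests a paste or a generated block. The threshold is an assumption.
    """
    return [e for e in events if e.chars_inserted >= min_chars]


def burst_share(events, min_chars=80):
    """Fraction of all inserted characters that arrived in paste-like bursts."""
    total = sum(e.chars_inserted for e in events)
    if total == 0:
        return 0.0
    bursty = sum(e.chars_inserted for e in find_paste_like_bursts(events, min_chars))
    return bursty / total


# Hypothetical session: 40 single keystrokes, then one 360-character insertion.
session = [EditEvent(timestamp_s=i * 0.3, chars_inserted=1) for i in range(40)]
session.append(EditEvent(timestamp_s=30.0, chars_inserted=360))

bursts = find_paste_like_bursts(session)
print(len(bursts), round(burst_share(session), 2))
```

A high burst share is a prompt for a follow-up question, not proof of cheating: candidates also paste their own snippets, and editor autocomplete can insert long completions in a single change.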

2. Ask Candidates to Explain Their Own Code

This is one of the simplest, most effective detection methods - and requires no extra technology.

Asking candidates to explain their solution exposes everything. Someone who wrote the code will walk through their logic easily. Candidates who copied from an AI hesitate, struggle with explanations, or can't make necessary modifications.

Engineering leaders using Utkrusht AI's watch-them-work tasks consistently report the same finding: candidates who can't explain their own resume entries struggle even harder justifying live decisions during actual tasks.

As one engineering leader put it, most candidates couldn't even explain their own projects - Utkrusht AI's 20-minute real-job assessments cut through that immediately.

How to Structure Explanation-Based Follow-Ups

"Walk me through why you made this specific tradeoff." A genuine engineer has a reason. A cheater stalls.

"What would break if the dataset was 10x larger?" Tests real performance understanding, not memorized answers.

"If you had to rewrite this differently, what would you change first?" Real engineers have opinions. Cheaters don't.

3. Use Watch-them-Work Assessments Instead of Algorithm Puzzles

If candidates can cheat their way through your technical assessment using AI, your assessment might be measuring the wrong things. Many hiring teams give candidates generic academic algorithm questions - the kind LLMs have been trained on extensively - then act surprised when candidates use those same LLMs to answer expertly.

The smarter approach is to give candidates the actual work tasks they'd face on day one. Utkrusht AI, for example, replaces abstract puzzles with real watch-them-work tasks - debugging APIs, optimizing database queries, and refactoring production code - with the goal of exposing genuine, hands-on understanding. When a candidate has to fix a Docker container on an EC2 server, no AI overlay can substitute for real skill.

Real-Task Examples That Expose Skill Gaps

Instead of asking why SQL reads get slow, make the candidate connect to an actual SQL database, add indexes, and confirm the latency improvement

Instead of asking about design patterns, make them implement dependency injection with Guice and write unit tests for it

Instead of asking about Docker internals, make them fix a broken Docker setup on an EC2 server
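To make the first task above concrete, here is a small sketch of what "add an index and confirm the improvement" might look like. It uses Python's built-in `sqlite3` module with an in-memory database as a stand-in for whatever database your team actually runs; the `orders` table, its columns, and the index name are hypothetical.

```python
import sqlite3
import time

# In-memory stand-in for a real database; table and data are made up.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)"
)
conn.executemany(
    "INSERT INTO orders (customer_id, total) VALUES (?, ?)",
    [(i % 5000, float(i)) for i in range(50_000)],
)

QUERY = "SELECT COUNT(*), SUM(total) FROM orders WHERE customer_id = ?"


def query_plan(connection):
    """Return SQLite's plan description for QUERY as one string."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + QUERY, (42,)).fetchall()
    return " | ".join(row[3] for row in rows)  # column 3 holds the detail text


def time_query(connection, repeats=20):
    """Average wall-clock seconds per run of QUERY."""
    start = time.perf_counter()
    for _ in range(repeats):
        connection.execute(QUERY, (42,)).fetchone()
    return (time.perf_counter() - start) / repeats


plan_before, secs_before = query_plan(conn), time_query(conn)
conn.execute("CREATE INDEX idx_orders_customer_id ON orders (customer_id)")
plan_after, secs_after = query_plan(conn), time_query(conn)

print("before:", plan_before, f"{secs_before * 1000:.3f} ms")
print("after: ", plan_after, f"{secs_after * 1000:.3f} ms")
```

What matters in the interview is less the final timing than whether the candidate checks the query plan before and after, and can explain why the plan changed from a full scan to an index search.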

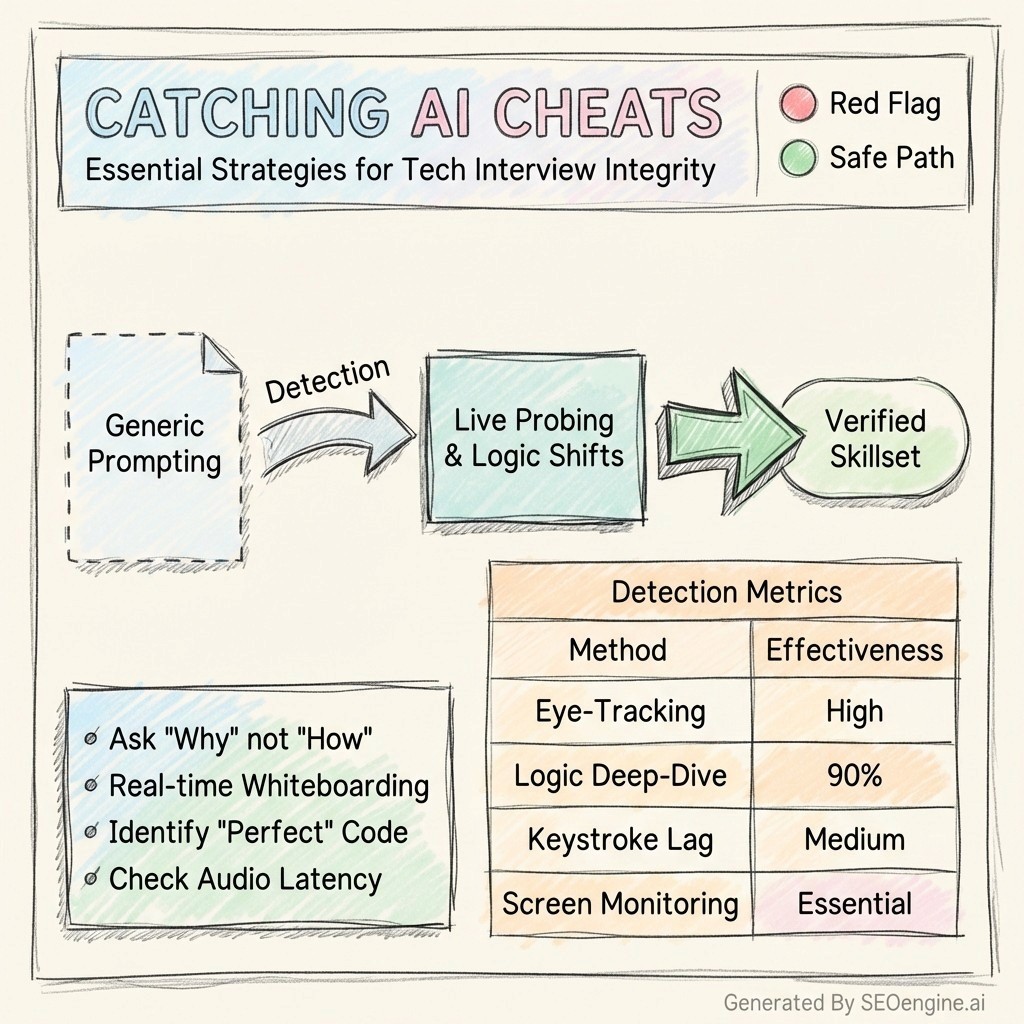

4. Use AI-Powered Proctoring to Monitor Behavioral Signals

For remote technical screenings at scale, behavioral monitoring is now a standard defense.

Modern proctoring platforms analyze behavioral and technical signals: detecting AI-generated code patterns and similarity anomalies, monitoring tab switching and browser behavior, analyzing typing speed and keystroke consistency, flagging unusual eye movement, and identifying voice modulation irregularities.

These systems use multimodal machine learning to model natural vs. adversarial interaction patterns. Detection accuracy has reportedly risen from approximately 85% to over 97%.
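As a rough illustration of one of these signals, keystroke consistency, the sketch below computes how much the gaps between keystrokes vary. It is a toy heuristic, not how any commercial proctoring product works: the function names, the timestamp format, and the 0.2 threshold are all assumptions, and the premise that an unusually uniform cadence deserves a closer look is itself only a hypothesis.

```python
from statistics import mean, pstdev


def inter_key_intervals(timestamps_s):
    """Gaps in seconds between consecutive keystroke timestamps."""
    return [b - a for a, b in zip(timestamps_s, timestamps_s[1:])]


def cadence_variation(timestamps_s):
    """Coefficient of variation (std / mean) of the inter-key gaps.

    Returns None when there are too few keystrokes to say anything.
    """
    gaps = inter_key_intervals(timestamps_s)
    if len(gaps) < 2 or mean(gaps) == 0:
        return None
    return pstdev(gaps) / mean(gaps)


def flag_uniform_cadence(timestamps_s, min_variation=0.2):
    """Flag sessions whose typing rhythm is suspiciously regular.

    The threshold is an illustrative assumption, not a calibrated value.
    """
    cv = cadence_variation(timestamps_s)
    return cv is not None and cv < min_variation


# Hypothetical data: a metronome-like stream and an irregular, human-like one.
uniform = [i * 0.1 for i in range(20)]
irregular = [0.0, 0.12, 0.31, 0.38, 0.95, 1.02, 1.6, 1.71]

print(flag_uniform_cadence(uniform), flag_uniform_cadence(irregular))
```

A single statistic like this would produce false positives on its own, which is presumably why production systems combine many signals rather than relying on one.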

Proctoring Methods Compared

| Method | Catches Overlay Tools | Catches Proxy Interviews | Works Remotely | Candidate Experience |
| --- | --- | --- | --- | --- |
| Screen sharing only | ✗ | ✗ | ✅ | Good |
| Tab monitoring | ✗ | ✗ | ✅ | Neutral |
| Behavioral AI proctoring | ✅ | Partial | ✅ | Neutral |
| Watch-them-work | ✅ | ✅ | ✅ | Good |
| In-person interview | ✅ | ✅ | ✗ | Variable |

5. Let Candidates Use AI - Then Assess How They Use It

This is the most forward-thinking approach, and it's where the best hiring teams are headed.

Companies like Google and Amazon encourage engineers to use AI daily - Google has publicly shared that a significant portion of its codebase now includes AI-generated code - yet interviews often ban those same tools.

Instead of banning AI, measure how well a candidate uses it. Does the engineer understand what AI generated? Can they spot errors in AI-produced code? Can they extend the output for a specific use case? Preventing engineers from using AI in interviews may actually hamper hiring of engineers who can adapt and grow with new technologies.

Utkrusht AI's platform operationalizes this directly: candidates use all available tools, including AI, and the platform tracks how they use them. Full session recordings show hiring managers exactly where AI was used - whether for helpful guidance or direct copy-paste - giving a genuinely transparent picture of skill and judgment.
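One simple way to picture the difference between guidance and direct copy-paste is to measure how much of the final submission appears verbatim in the AI responses logged during the session. The sketch below is a hypothetical illustration of that idea, not a description of Utkrusht AI's implementation; the function names and the line-level matching rule are assumptions.

```python
def normalized_lines(text):
    """Non-empty lines with surrounding whitespace removed."""
    return [line.strip() for line in text.splitlines() if line.strip()]


def verbatim_ai_share(ai_responses, final_code):
    """Fraction of final-code lines that appear verbatim in any AI response."""
    ai_lines = set()
    for response in ai_responses:
        ai_lines.update(normalized_lines(response))
    submitted = normalized_lines(final_code)
    if not submitted:
        return 0.0
    copied = sum(1 for line in submitted if line in ai_lines)
    return copied / len(submitted)


# Hypothetical session: the assistant suggested `add`; the candidate wrote `sub`.
ai_log = ["def add(a, b):\n    return a + b\n"]
submission = (
    "def add(a, b):\n"
    "    return a + b\n"
    "\n"
    "def sub(a, b):\n"
    "    return a - b\n"
)

print(verbatim_ai_share(ai_log, submission))
```

Line-level matching is crude: short, common lines can match by coincidence, and lightly edited AI output will not match at all. A number like this is best treated as a pointer to the parts of the recording worth reviewing and asking the candidate about.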

This transparent, contrarian approach to AI usage is one of the defining principles behind Utkrusht AI's philosophy: don't restrict the tools engineers use every day, but document and evaluate how thoughtfully they use them.

Comparison: Traditional vs. Watch-them-Work Assessments

| Feature | Traditional Coding Tests | Watch-them-Work Tasks |
| --- | --- | --- |
| AI cheating risk | ✗ Very high | ✅ Low |
| Predicts actual job performance | ✗ Weak | ✅ Strong |
| Tests real workflow | ✗ No | ✅ Yes |
| Candidates can memorize answers | ✗ Yes | ✅ No |
| Candidate completion rates | ✗ Low (45-60 min tests) | ✅ High (20-min tasks) |
| Scales across 200+ skills | ✗ Limited | ✅ Yes |

6. Verify Identity and Watch for Proxy Interviews

Some recruiters estimate that in certain hot tech job markets in 2023-24, up to 10-15% of remote interviews involved some form of third-party assistance or impersonation.

In a proxy interview, a qualified expert answers the questions off camera while a less-qualified candidate sits on camera. High-fidelity audio software relays the expert's answers while the candidate simply moves their lips.

Practical Ways to Detect Proxy Interviews

Require a government-issued ID at the start of any video assessment

Ask spontaneous, unscripted follow-up questions requiring real-time reasoning

Change topics abruptly mid-conversation to observe natural vs. coached response timing

Use multiple short sessions instead of a single long one - harder to sustain a proxy arrangement

Comparison: Cheat Detection Techniques

| Technique | Difficulty to Implement | Detection Rate | Cost |
| --- | --- | --- | --- |
| Ask candidates to explain code | ✅ Easy | ✅ High | ✅ Free |
| Behavioral proctoring tools | Moderate | ✅ High | Moderate |
| Watch-them-work method | Moderate | ✅ Very High | Moderate |
| In-person interviews | ✗ Hard (logistics) | ✅ Very High | ✗ High |
| AI usage monitoring | Moderate | ✅ High | Moderate |
| Screen sharing only | ✅ Easy | ✗ Low | ✅ Free |

Key Takeaways

Cheating adoption more than doubled between June and December 2025, making reactive measures insufficient

Watching the coding process in real time reveals more than reviewing final output alone

Asking candidates to explain their solutions remains one of the most reliable free detection methods

Real-job tasks naturally resist AI cheating by requiring contextual judgment, not memorized answers

Allowing monitored AI use gives a truer picture of a candidate's actual engineering skill set than blanket bans

Frequently Asked Questions

How do I know if a candidate is using AI during a remote coding test?

Observe behavior: eyes wandering to the side, reflections of other apps in candidates' glasses, and answers that sound rehearsed or don't match the question asked. Asking candidates to walk through their logic verbally is the fastest in-the-moment check.

Is it even worth trying to ban AI in tech interviews?

Banning AI is increasingly difficult to enforce. One tech leader suspected 80% of their candidates used LLMs on top-of-funnel code tests, despite being explicitly told not to. A better investment is redesigning assessments so AI assistance alone can't produce a passing result.

What does a "watch-them-work" assessment actually look like?

It means giving candidates tasks they'd face in their first week. Instead of abstract algorithm questions, give them a real codebase with a bug to fix, a query to optimize, or a feature to implement - with access to all tools they'd normally use, including documentation and AI assistants.

How much does a bad hire actually cost my company?

A single bad hire costs organizations over $50,000 in direct losses, not counting months of lost productivity, rework, and the cost of rerunning the hiring process. For small engineering teams, one wrong hire can stall an entire product initiative.

Can AI proctoring tools catch modern cheating tools like Cluely or Interview Coder?

Modern cheating tools use GPU-level overlays that render answers in a layer the video conferencing software does not capture - the candidate sees answers while the interviewer sees only a clean workspace. Behavioral AI proctoring, which analyzes response timing and coding rhythm rather than screen contents, is far more effective than screen sharing alone.

How does allowing AI use during assessments help catch cheaters?

When you let candidates use AI freely, then ask them to explain, modify, or extend the output, you instantly separate skilled engineers from those entirely dependent on the tool. Genuine engineers critically assess what AI generates. Cheaters cannot.

How quickly can I expect results from switching to watch-them-work assessments?

Teams that shift to such tasks typically see faster shortlisting, fewer wasted interviews, and more confident hiring decisions within the first two to three hiring cycles. The process filters for demonstrated skill rather than interview performance - a much stronger predictor of on-the-job success.

Conclusion

Catching cheaters in tech interviews is no longer just about adding a proctoring tool or banning ChatGPT. The most effective approach is making cheating structurally irrelevant by assessing candidates in ways that demand genuine understanding - real tasks, live explanation, and monitored AI use.

For small or mid-sized engineering teams, every bad hire costs time, money, and momentum you can't afford. Most of the methods above cost nothing. Start by asking your next candidate to explain their solution out loud, step by step.

You'll know within 2 minutes whether you're talking to a real engineer or someone reading off a script.

Utkrusht AI's results across more than 15,000 assessed candidates confirm that proof-of-work hiring is not just possible - it's practical. The platform delivers a verified top-10 shortlist within 48 hours from candidates who have already proven their skills in conditions mirroring the actual job.

That's the standard every hiring team should be working toward: not just filtering out cheaters, but building a process where only genuine skill can get through.

Start your next hiring cycle by replacing one coding test with a real task - and watch how quickly your shortlist quality improves.

Founder, Utkrusht AI

Ex-Euler Motors, Oracle, Microsoft. 12+ years as an engineering leader; 500+ interviews conducted across the US, Europe, and India
